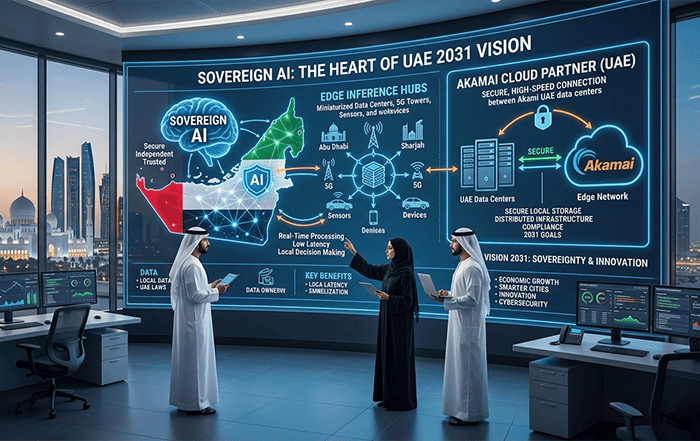

Sovereign AI is the only path to 2031 compliance for government and healthcare entities in the region. The UAE National Strategy for Artificial Intelligence 2031 mandates a shift from overseas LLM processing to local infrastructure to prevent data leakage and ensure national security. This post examines why edge inference, supported by an Akamai cloud partner in the UAE, is the structural requirement for this transition.

The structural shift to sovereign AI

Using offshore LLM APIs puts organizations into a dangerous compliance vacuum. Current monitoring systems prioritize metrics like latency and accuracy, but fail to report on the data residency paths of individual queries. This lack of transparency leads to data moving across borders in direct violation of the UAE Data Office’s requirement for explicit jurisdictional control.
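To make the monitoring gap concrete, here is a minimal sketch of a per-query residency check in Python. Everything in it is an assumption for illustration: the `QueryTrace` record, the `hop_countries` field, and the use of the ISO 3166-1 code `AE` as the only allowed jurisdiction are hypothetical and do not describe any specific monitoring product.

```python
from dataclasses import dataclass

# Assumption: "AE" (ISO 3166-1 alpha-2 for the UAE) is the only allowed jurisdiction.
ALLOWED_JURISDICTIONS = {"AE"}


@dataclass(frozen=True)
class QueryTrace:
    """Hypothetical per-query record that tracks where data was processed."""
    query_id: str
    latency_ms: float
    hop_countries: tuple  # country code of every hop that handled the query


def residency_violations(trace):
    """Return the country codes on this query's path that fall outside the allowed set."""
    return [c for c in trace.hop_countries if c not in ALLOWED_JURISDICTIONS]


def residency_report(traces):
    """Map query_id -> offending country codes, listing only queries with violations."""
    report = {}
    for trace in traces:
        violations = residency_violations(trace)
        if violations:
            report[trace.query_id] = violations
    return report


# Illustrative traces only; these are not real measurements.
traces = [
    QueryTrace("q1", 42.0, ("AE", "AE")),
    QueryTrace("q2", 310.0, ("AE", "US")),
]
report = residency_report(traces)
```

In this sketch, a query with excellent latency can still appear in the report, which is the point: residency is tracked as its own signal rather than inferred from performance metrics.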

Sending patient or citizen data to overseas engines creates a “sovereignty blind spot” where 78% of an AI’s strategic layers, including model weights and logs, remain exposed to foreign jurisdictions. This vulnerability directly contradicts Federal Decree Law No. 45 of 2021 (Personal Data Protection Law), which restricts cross-border data transfers to jurisdictions that do not meet UAE adequacy requirements. This compliance gap results in a failed audit for the “UAI Seal of Approval,” which halts the deployment of AI services in regulated sectors like healthcare and finance.

Moving to a sovereign architecture ensures that all AI inference happens under UAE jurisdiction and provides the accountability needed for high-impact government initiatives. By localizing the model execution layer, entities can guarantee that sensitive data, from defense plans to population records, never leaves territorial borders. This shift allows for the development of “homegrown” AI technology that contributes directly to the national goal of generating AED 335 billion in economic growth by 2031.

| Performance Metric | Centralized Cloud AI | Sovereign Edge AI (Akamai) |
|---|---|---|
| Data Residency | Offshore / Multiple Jurisdictions | 100% Inside UAE Borders |
| Typical Latency | 200ms – 500ms+ | Under 50ms (at the edge) |
| Throughput | Limited by backhaul capacity | 3x improvement in throughput |
| Compliance Layer | Foreign Laws (e.g., US CLOUD Act) | UAE Federal Decree Law No. 45 |
| Infrastructure | Large Core Data Centers | 4,200+ Distributed Points of Presence |
| Model Control | Vendor-locked API | Full control over local model weights |

Standard AI workflows depend on round-trip communication to core data centers, creating “sluggish” responses that fail in mission-critical scenarios like real-time diagnostics. A few hundred milliseconds of delay may be acceptable for a marketing chatbot, but it is catastrophic for autonomous transportation or robotics, where split-second safety decisions are required. Most legacy cloud systems are not built for this “agentic” speed.

In healthcare specifically, a 2.5x increase in latency when interpreting retinal images or cardiac data can mean the difference between preventive intervention and acute care. When inference happens thousands of miles away, the network jitter alone makes human-like responsiveness impossible for surgical robots or emergency dispatch AI. This delay erodes the user engagement required to meet the 34% annual AI growth rate targeted by UAE healthcare providers.

Deploying Akamai cloud computing resources directly at the edge resolves these performance bottlenecks by putting NVIDIA Blackwell AI infrastructure closer to where data is created. This architecture allows for a “Streaming Inference” model, where agentic AI can perform multiple sequential tasks, such as detecting fraud or optimizing a transaction, without waiting for core data center responses. This mechanism delivers the instant engagement required for the next generation of intelligent public services.

To achieve these speed gains, teams must rethink the underlying hardware distribution.

The mechanics of edge inference

Edge inference decentralizes the AI lifecycle, routing lightweight “routine” tasks to local NVIDIA NIM microservices while reserving sophisticated reasoning for centralized “AI factories”. This distributed approach uses Akamai’s 4,200+ points of presence so that when a request is made in Dubai or Abu Dhabi, the decisioning logic executes at the closest possible network hop.
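The routing idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Akamai's or NVIDIA's actual routing logic: the `needs_deep_reasoning` flag, the tier labels, the point-of-presence names, and the round-trip times are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical tier labels; real deployments would map these to actual endpoints.
EDGE = "edge"
CORE = "core"


@dataclass(frozen=True)
class InferenceRequest:
    task: str
    needs_deep_reasoning: bool  # assumption: the caller classifies the task up front


def choose_tier(request):
    """Send routine tasks to the edge and sophisticated reasoning to the core."""
    return CORE if request.needs_deep_reasoning else EDGE


def nearest_pop(rtt_ms_by_pop):
    """Pick the point of presence with the lowest measured round-trip time."""
    if not rtt_ms_by_pop:
        raise ValueError("no points of presence available")
    return min(rtt_ms_by_pop, key=rtt_ms_by_pop.get)


def route(request, rtt_ms_by_pop):
    """Return a target label: the core 'AI factory' or the closest edge location."""
    if choose_tier(request) == CORE:
        return "core:ai-factory"
    return f"edge:{nearest_pop(rtt_ms_by_pop)}"


# Illustrative round-trip times in milliseconds; not real measurements.
rtts = {"dubai": 8.0, "abu-dhabi": 12.0}
routine = route(InferenceRequest("classify-transaction", False), rtts)
complex_task = route(InferenceRequest("multi-step-planning", True), rtts)
```

Here `routine` resolves to the Dubai edge location and `complex_task` to the central tier. A production router would likely also weigh model availability and load, which this sketch leaves out.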

Securing the 2031 vision via local partnerships

Relying solely on global cloud hyperscalers often leads to “egress traps” and unexpectedly high costs for Arabic-native workloads. Many organizations find that while training a model is a one-time expense, the cost of running millions of inference calls through proprietary foreign APIs becomes a strategic dead end. This financial friction hinders the UAE’s goal of having 20% of non-oil GDP come from AI by 2031.

Without a localized strategy, trust in public AI systems erodes because users experience inconsistent performance and have data privacy concerns. A lack of domestic routing controls means that even “local” requests may be routed through international exchanges to reach a global provider’s nearest hub. This creates unnecessary exposure to foreign surveillance and jurisdiction, such as the U.S. CLOUD Act, which can compromise national sovereignty.

Engaging with an Akamai partner in the region provides the specialized knowledge needed to navigate these sovereign requirements while optimizing for performance. Working with a specialist helps businesses deploy NVIDIA RTX PRO Servers and BlueField DPUs locally. This step guarantees that high-performance computing is available without the overhead of building massive, centralized data centers. Such a collaborative model allows government entities to scale securely, meeting the “Stargate UAE” vision of a world-class AI hub at home.

The shift toward localized processing is supported by a robust ecosystem of regional expertise.

The UAE edge infrastructure ecosystem

Local UAE partners now assist with integrating the “Falcon” LLM and other Arabic-native models into the edge environment, which brings cultural and linguistic relevance. This ecosystem includes the development of proof-of-concept projects in predictive tourism and diagnostic tools that are fully compliant with the UAE Charter for the Development and Use of AI.

Operationalizing the 2026 Edge AI ROI Calculator

To manage these transitions, leadership must move beyond vague “efficiency” goals and use concrete, measurable indicators of success. We recommend using the 2026 Edge AI ROI Calculator to determine the actual value of moving workloads to the edge.

“Sovereignty is no longer a constraint; it is the driver of digital competitiveness for nations that own their AI future.”

This tool operationalizes the “Inference-to-Value Ratio” by measuring:

- Latency Reduction (ms): The exact improvement in response time compared to offshore APIs.

- Compliance Cost-Avoidance: The potential fines and legal fees saved by meeting Federal Decree Law No. 45.

- Throughput Gain: The increase in the number of concurrent users supported by the 3x throughput capacity of the Akamai partner network.
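As a rough illustration of how these three measurements could be computed, here is a minimal Python sketch. It is not the calculator itself: the `WorkloadProfile` fields and the function names are hypothetical, and the fine and legal-fee amounts are placeholder inputs rather than real figures. The latency and throughput inputs reuse the illustrative values from the comparison table above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkloadProfile:
    """Hypothetical inputs for one workload; every field is an assumption."""
    offshore_latency_ms: float
    edge_latency_ms: float
    expected_fines_aed: float       # placeholder estimate supplied by the user
    expected_legal_fees_aed: float  # placeholder estimate supplied by the user
    baseline_concurrent_users: int
    throughput_multiplier: float


def latency_reduction_ms(p):
    """Improvement in response time compared to the offshore API."""
    return p.offshore_latency_ms - p.edge_latency_ms


def compliance_cost_avoidance_aed(p):
    """Potential fines plus legal fees avoided by staying compliant."""
    return p.expected_fines_aed + p.expected_legal_fees_aed


def throughput_gain_users(p):
    """Additional concurrent users supported after the move to the edge."""
    scaled = int(p.baseline_concurrent_users * p.throughput_multiplier)
    return scaled - p.baseline_concurrent_users


# Illustrative inputs only: 200 ms offshore vs. 50 ms edge, and a 3x multiplier.
example = WorkloadProfile(
    offshore_latency_ms=200.0,
    edge_latency_ms=50.0,
    expected_fines_aed=100_000.0,
    expected_legal_fees_aed=25_000.0,
    baseline_concurrent_users=1000,
    throughput_multiplier=3.0,
)
```

With these inputs, the sketch reports a 150 ms latency reduction and 2,000 additional concurrent users. How the three values are combined into a single “Inference-to-Value Ratio” is not specified here, so the sketch keeps them separate.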

By focusing on these observable metrics, UAE leaders can ensure their AI initiatives are the structural pillars of the 2031 Vision.

Ready to audit your data residency? Moving from centralized cloud to sovereign edge is a structural transition. If you want to see how your current AI infrastructure maps against the 2031 Vision, reach out to Codelattice at askus@codelattice.com for a free architectural consultation.